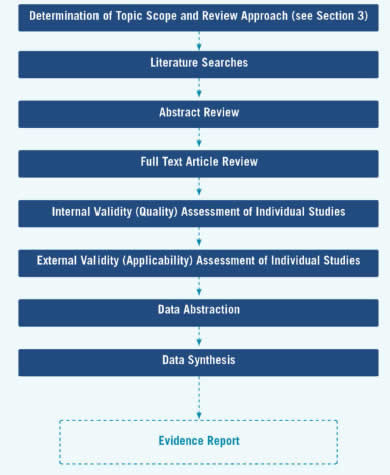

The evidence review development begins with finalization of topic scope, review approach, and research plan, as described above, and continues in the next stage with literature searches. The stages in the evidence review development are displayed in Figure 5.

Table of Contents

- 4.1 Literature Retrieval and Review of Abstracts and Articles

- 4.2 Internal and External Validity Assessment of Individual Studies

- 4.3 Data Abstraction

- 4.4 Data Synthesis

- 4.5 Other Issues in Assessing Evidence at the Individual Study Level

- 4.6 Incorporating Other Systematic Reviews in Task Force Reviews

- 4.7 Reaffirmation Evidence Update Process

4.1 Literature Retrieval and Review of Abstracts and Articles

4.1.1 Methods for Literature Searches

All literature searches are conducted using MEDLINE and the Cochrane Central Register of Controlled Trials, using appropriate search terms to retrieve studies for all key questions that meet the inclusion/exclusion criteria for the topic. Other databases are included when indicated by the topic (e.g., PsycINFO for mental health topics). Searches are limited to articles published in the English language. For reviews to update recommendations, searches are conducted for literature published approximately 12 months prior to the last search of the previous review to the present. For new topics, the date range for the search is determined by the nature of the screening and treatment interventions for the topic, with longer time frames for well-established interventions that have not been the focus of recent research activity or topics with limited existing research and shorter time frames for topics with more recently developed interventions. The EPC review team supplements these searches with suggestions from experts and a review of reference lists from other relevant publications.

Search terms used for each key question, along with the yield associated with each term, are documented in an appendix of the final evidence review. A follow-up or "bridge" search to capture newly published data is conducted close to the time of completion of the draft evidence review, with the exact timing determined by the topic team.

4.1.2 Procedures for Abstract and Article Review

After literature searches are conducted, the EPC review team uses a two-stage process to determine whether identified literature is relevant to the key and contextual questions. This two-stage process is designed to minimize errors and to be efficient, transparent, and reproducible. First, titles and abstracts are reviewed independently by two reviewers by broadly applying a priori inclusion/exclusion criteria developed during the work plan stage of the review. When in doubt as to whether an article might meet the inclusion criteria, reviewers err on the side of inclusion so that an article is retrieved and can be reviewed in detail at the article stage. All citations are coded with an excluded or included code, which is managed in a database and used to guide the further literature review steps. Two reviewers then independently evaluate the full-text articles for all citations included at the title/abstract stage. Included articles receive codes to indicate the key question(s) for which they meet criteria and excluded articles are coded with the primary reason for exclusion, though additional reasons for exclusion may also apply.
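The title/abstract stage described above can be sketched in a few lines. This is a minimal illustration, not the EPC's actual tooling: the `AbstractVotes` class, the citation identifiers, and the rule for combining the two independent votes (any "include" vote retrieves the full text, in the spirit of erring on the side of inclusion) are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class AbstractVotes:
    """Independent title/abstract decisions from two reviewers (hypothetical record)."""
    citation_id: str
    reviewer_1_include: bool
    reviewer_2_include: bool


def abstract_stage_code(votes: AbstractVotes) -> str:
    # Assumed combining rule: a single "include" vote is enough to retrieve
    # the full text, so doubtful citations move on to the article stage.
    if votes.reviewer_1_include or votes.reviewer_2_include:
        return "included"
    return "excluded"


# Hypothetical citations, coded for the database that guides later steps.
screened = {
    v.citation_id: abstract_stage_code(v)
    for v in [
        AbstractVotes("cit-001", True, True),
        AbstractVotes("cit-002", True, False),   # reviewers disagree
        AbstractVotes("cit-003", False, False),
    ]
}
print(screened)
```

Under this assumed rule, only citations that both reviewers exclude are dropped before full-text review.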

4.1.3 Literature Database

For each systematic review, the EPC review team establishes a database of all articles located through searches and from other sources. The database is the source of the final literature flow diagram documenting the review process. Information captured in the database includes the source of the citation (e.g., search or outside source), whether the abstract was included or excluded, the key question(s) associated with each included abstract, whether the article was excluded (with primary reason for exclusion) or included in the review, and other coding approaches developed to support the specific review. For example, a hierarchical approach to answering a question may be proposed at the work plan stage, specifying that reviewers will consider a type of study design or a clinical setting only if research data are too sparse for the preferred type of study. As abstracts and articles are reviewed, studies of the secondary design or setting can be coded so that they are easy to retrieve later in the review, if warranted.
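As a rough sketch of how such a database can feed the literature flow diagram, the record below holds the fields listed above and a helper tallies citations by stage. The `Citation` class, its field names, and the sample entries are hypothetical; a real review would add topic-specific codes.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Citation:
    citation_id: str
    source: str                                  # e.g., "search" or "outside source"
    abstract_included: bool
    key_questions: List[str] = field(default_factory=list)
    article_included: Optional[bool] = None      # None: never reached full-text review
    exclusion_reason: Optional[str] = None       # primary reason only


def flow_counts(citations: List[Citation]) -> Dict[str, int]:
    """Tally citations by stage for a literature flow diagram."""
    counts: Counter = Counter()
    for c in citations:
        counts["identified"] += 1
        if not c.abstract_included:
            counts["excluded at abstract"] += 1
            continue
        counts["full text reviewed"] += 1
        if c.article_included:
            counts["included in review"] += 1
        else:
            reason = c.exclusion_reason or "unspecified"
            counts["excluded at article: " + reason] += 1
    return dict(counts)


# Hypothetical entries for illustration only.
citations = [
    Citation("c1", "search", True, ["KQ1"], True),
    Citation("c2", "search", True, [], False, "wrong population"),
    Citation("c3", "outside source", False),
    Citation("c4", "search", True, ["KQ1", "KQ2"], True),
]
print(flow_counts(citations))
```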

4.2 Internal and External Validity Assessment of Individual Studies

By means of its analytic framework and key questions, the Task Force indicates what evidence is needed to make its recommendation. By setting explicit inclusion/exclusion criteria for the searches for each key question, the Task Force indicates what evidence it will consider admissible and applicable. The critical aspect used to determine whether an individual study is admissible is its internal and external validity with respect to the key question posed. This initial examination of the internal and external validity of individual studies is conducted by the EPC review team using the USPSTF criteria as a baseline and newer methods of quality assessment as appropriate (see Appendix VI and Appendix VII for more detail on USPSTF criteria). For example, studies of interventions that require training or equipment not feasible in most primary care settings would be judged to have poor external validity and would not be admissible evidence.

4.2.1 Assessing Internal Validity (Quality) of Individual Studies

The Task Force recognizes that research design is an important component of the validity of the information in a study for the purpose of answering a key question. Although RCTs cannot answer all key questions, they are ideal for questions regarding benefits or harms of various interventions. Thus, for the key questions of benefits and harms, the Task Force currently uses the following hierarchy of research design:

I. Properly powered and conducted RCT; well-conducted systematic review or meta-analysis of homogeneous RCTs

II-1. Well-designed controlled trial without randomization

II-2. Well-designed cohort or case-control analytic study

II-3. Multiple time-series, with or without the intervention; results from uncontrolled studies that yield results of large magnitude

III. Opinions of respected authorities, based on clinical experience; descriptive studies or case reports; reports of expert committees

Although research design is an important determinant of the quality of information provided by an individual study, the Task Force also recognizes that not all studies with the same research design have equal internal validity (quality).

To assess more carefully the internal validity of individual studies within research designs, the Task Force has developed design-specific criteria for assessing the internal validity of individual studies. The EPC may supplement these with the use of newer methods of assessing quality of individual studies as appropriate.

Figure 5. Stages of Evidence Review Development

These criteria (Appendix VI) provide general guidelines for categorizing studies into one of three internal validity categories: "good," "fair," and "poor." These specifications are not inflexible rules; individual exceptions, when explicitly explained and justified, can be made. In general, a "good" study is one that meets all design-specific criteria. A "fair" study is one that does not meet at least one specified criterion, but has no known important limitation that could invalidate its results. "Poor" studies have at least one "fatal flaw" or multiple important limitations. A fatal flaw is due to a deficit in design or implementation of the study that calls into serious question the validity of its results for the key question being addressed.
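The three-category logic can be written out as a small decision function. This is only a sketch of the general guideline: the criterion names are hypothetical, the inputs (a count of important limitations and a fatal-flaw flag) are an assumed way of recording reviewer judgment, and the text itself stresses that justified exceptions are allowed.

```python
from typing import Dict


def rate_internal_validity(criteria_met: Dict[str, bool],
                           important_limitations: int = 0,
                           fatal_flaw: bool = False) -> str:
    """Map design-specific criteria and reviewer judgments to good/fair/poor."""
    # "Poor": at least one fatal flaw or multiple important limitations.
    if fatal_flaw or important_limitations >= 2:
        return "poor"
    # "Good": every design-specific criterion is met (assumed here to also
    # require that no important limitation was noted).
    if all(criteria_met.values()) and important_limitations == 0:
        return "good"
    # "Fair": everything in between.
    return "fair"


# Hypothetical criteria for a randomized trial.
trial = {
    "adequate randomization": True,
    "comparable groups maintained": True,
    "outcome assessment masked": False,
}
print(rate_internal_validity(trial))                   # one unmet criterion
print(rate_internal_validity(trial, fatal_flaw=True))  # fatal flaw overrides
```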

The EPC, at its discretion, may include some poor-quality studies in its review. When studies of poor quality are included in the results of the systematic review, the EPC explains the reasons for inclusion, clearly identifies which studies are of poor quality, and states how poor-quality studies are analyzed with regard to good- and fair-quality studies. When poor-quality studies are excluded, the EPC identifies the reasons for exclusion in an appendix table.

4.2.2 Assessing External Validity (Applicability) of Individual Studies

Judgments about the external validity (applicability) of a study pertinent to a preventive intervention address three main questions:

- Considering the subjects in the study, to what degree do the study's results predict the likely clinical results among asymptomatic persons who are the recipients of the preventive service in the United States?

- Considering the setting in which the study was done, to what degree do the study's results predict the likely clinical result in primary care practices in the United States?

- Considering the providers who were a part of the study, to what degree do the study's results predict the likely clinical results among providers who would deliver the service in the U.S. primary care setting?

4.2.2.1 Criteria and Process

The criteria used to rate the external validity of individual studies according to the population, setting, and providers are described in detail in Appendix VII. As with internal validity, this assessment is usually conducted initially by the EPC review team, with input from Task Force members for critically important or borderline studies. This assessment is then used to answer the question, "If the study had been done with the usual U.S. primary care population, setting, and providers, what is the likelihood that the results would be different in a clinically important way?"

4.2.2.2 Population

Participants in a study may differ from persons receiving primary care in many ways. Such differences may include sex, ethnicity, age, comorbid conditions, and other personal characteristics. Some of these differences have a small potential to affect the study's results and/or the outcomes of an intervention. Other differences have the potential to cause large divergences between the study's results and what would be reasonably anticipated to occur in asymptomatic persons or those who are the target of the preventive intervention.

The choice of the study population may affect the magnitude of the benefit observed in the study through inclusion/exclusion criteria that limit the study to persons most likely to benefit; other study features may affect the risk level of the subjects recruited to the study. The absolute benefit from a service is often greater for persons at increased risk than for those at lower risk.
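A short worked calculation shows why absolute benefit tends to grow with baseline risk. It assumes, purely for illustration, that the intervention has the same relative risk at every risk level; the risks and the relative risk of 0.8 are hypothetical numbers, not results from any study.

```python
def absolute_risk_reduction(baseline_risk: float, relative_risk: float) -> float:
    """Absolute reduction in event risk, assuming a constant relative risk."""
    return baseline_risk * (1.0 - relative_risk)


def number_needed_to_treat(baseline_risk: float, relative_risk: float) -> float:
    """Persons who must receive the service to prevent one event."""
    return 1.0 / absolute_risk_reduction(baseline_risk, relative_risk)


RELATIVE_RISK = 0.8  # hypothetical: the service prevents 20% of events

for label, risk in [("higher-risk trial population", 0.10),
                    ("lower-risk general population", 0.01)]:
    arr = absolute_risk_reduction(risk, RELATIVE_RISK)
    nnt = number_needed_to_treat(risk, RELATIVE_RISK)
    print(f"{label}: ARR = {arr:.3f}, NNT = {nnt:.0f}")
```

With these assumed inputs, the same relative effect prevents about ten times as many events per person served in the higher-risk group, which is why a trial restricted to high-risk participants can overstate the absolute benefit expected in a lower-risk population.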

Adherence is likely to be greater in research studies than in usual primary care practice because of the presence of certain research design elements. This may lead to overestimation of the benefit of the intervention when delivered to persons who are less selected (i.e., who more closely resemble the general population) and who are not subject to the special study procedures.

4.2.2.3 Setting

When assessing the external validity of a study, factors related to the study setting should be considered in comparison with U.S. primary care settings. The choice of study setting may lead to an over- or under-estimate of the benefits and harms of the intervention as they would be expected to occur in U.S. primary care settings. For example, results of a study in which items essential for the service to have benefit are provided at no cost to study patients may not be attainable when the item must be purchased. Results obtained in a trial situation that ensures immediate access to care if a problem or complication occurs may not be replicated in a non-research setting, where the same safeguards cannot be ensured, and where, as a result, the risks of the intervention are greater. When considering the applicability of studies from international settings, the EPC often uses the United Nations Human Development Index to determine which settings might be most like the United States.

4.2.2.4 Providers

Factors related to the experience of providers in the study should be considered in comparison with the experience of providers likely to be encountered in U.S. primary care. Studies may recruit providers selected for their experience or high skill level. Providers involved in studies may undergo special training that affects their performance of the intervention. For these and other reasons, the effect of the intervention may be overestimated or the harms underestimated compared with the likely experience of unselected providers in the primary care setting.

4.3 Data Abstraction

Data are abstracted into abstraction forms or directly into evidence tables specific to each key question. Although the Task Force has no standard or generic abstraction form, the following broad categories are always abstracted from included articles:

- Study design

- Study period

- Inclusion/exclusion criteria

- Participant characteristics

- Participant recruitment setting and approach

- Number of participants who were recruited, randomized, received treatment, analyzed, and followed up

- Details of the intervention or screening test being studied

- Intervention setting

- Study results, with emphasis on health outcomes where appropriate

- Individual study quality information, including specific threats to validity

Information relevant to applicability is consistently abstracted (e.g., participant recruitment setting and approach, inclusion/exclusion criteria for the study). The EPC review team uses these general categories, and other categories if indicated, to develop an abstraction form or evidence table specific to the topic. For example, source of funding may be an important variable to abstract for some topics, and performance characteristics are abstracted for diagnostic accuracy studies.

The EPC review team abstracts only those articles that meet inclusion criteria. Abstractions are conducted by trained team members, and a second reviewer checks the abstracted data for accuracy, including data used in a summary table, a meta-analysis, or calculations supporting a balance sheet/outcomes table. Initial reliability checks are done for quality control.

4.4 Data Synthesis

The evidence review process involves assessing the validity and reliability of admissible evidence at two levels:

- The individual study (discussed in Section 4.2)

- The key question (discussed below)

The Task Force also assesses the adequacy of the evidence at the key question level (discussed in Section 6.2).

4.4.1 Quantitative Synthesis

When the evidence for a key question includes more than a few trials and there appears to be homogeneity in interventions and outcomes, meta-analysis is considered by the topic team. (See Section 4.6 for how the EPC may incorporate published meta-analyses and systematic reviews into the Task Force review.) Meta-analysis provides the advantage of giving summary effect size estimates generated through a transparent process. The decision to pool evidence is based on the judgment that the included studies are clinically and methodologically similar, or that important heterogeneity among included trials can be addressed in the meta-analysis in some way, such as subgroup or sensitivity analyses. The EPC review team considers whether a pooled effect would be clinically meaningful and representative of the given set of studies. A pooled effect may be misleading if the trials clinically or methodologically differ to such a degree that the average does not represent any of the trials. Interpretations of pooled effect sizes should consider all sources of clinical and methodological heterogeneity. Similarly, the interpretation of pooled results takes into account the width of the confidence interval and the consequences of making an erroneous assessment, not simply statistical significance. Results of meta-analyses are usually presented in forest plot diagrams.
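For readers who want to see what pooling involves, the sketch below computes an inverse-variance pooled estimate with a DerSimonian-Laird random-effects adjustment and the I² heterogeneity statistic. The choice of method is an assumption made for illustration (this section does not prescribe a model), and the effect estimates are hypothetical log relative risks with their standard errors.

```python
import math
from typing import Dict, List


def pool_random_effects(effects: List[float], std_errors: List[float]) -> Dict[str, float]:
    """DerSimonian-Laird random-effects pooling of study-level estimates."""
    if len(effects) < 2 or len(effects) != len(std_errors):
        raise ValueError("need at least two studies with matching inputs")
    w = [1.0 / se ** 2 for se in std_errors]            # fixed-effect weights
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    # Cochran's Q: weighted squared deviations from the fixed-effect estimate.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0     # between-study variance
    w_star = [1.0 / (se ** 2 + tau2) for se in std_errors]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se_pooled = math.sqrt(1.0 / sum(w_star))
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return {
        "pooled": pooled,
        "ci_low": pooled - 1.96 * se_pooled,
        "ci_high": pooled + 1.96 * se_pooled,
        "tau2": tau2,
        "i2_percent": i2,
    }


# Hypothetical log relative risks from four trials.
result = pool_random_effects([-0.30, -0.10, -0.25, 0.05], [0.12, 0.15, 0.20, 0.18])
print({k: round(v, 3) for k, v in result.items()})
```

A high I² or a wide interval in output like this is a prompt to revisit whether the trials are similar enough for the pooled value to be meaningful, which is the judgment the paragraph above describes.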

4.4.2 Qualitative Synthesis

If there are too few studies or data are too clinically or statistically heterogeneous for quantitative synthesis, the EPC review team qualitatively synthesizes the evidence in a narrative format, using summary tables to display differences between important study characteristics and outcomes across included studies for each key question.

4.4.3 Overall Summary of Evidence

The EPC review team provides an overall summary of the evidence by key question in table format (Appendix XII). The table includes the following domains:

- Key question

- Number of studies and observations for each study design

- Summary of findings (quantitative and qualitative findings for each important outcome, with some indication of its variability)

- Consistency/precision (the degree to which studies estimate the same type [benefit/harm] and magnitude of effect)

- Estimates of potential reporting bias (publication, selective outcome reporting, or selective analysis reporting bias)

- Overall study quality (combined summary of individual study-level quality assessments)

- Body of evidence limitations (qualitative descriptions of important limitations in body of evidence from what would have been desired to answer the overall key question)

- Applicability (descriptive assessment of how well the overall body of evidence would apply to the U.S. population based on settings, populations, and other intervention characteristics)

- Overall strength of evidence (brief explanatory text describing deficiencies in the evidence and stability of the findings)

Within key questions, it may be most informative to stratify the evidence by subpopulation or by type of intervention/comparison or outcome, depending on how the Task Force has conceptualized the questions for the particular topic. The EPC review team does not publish an actual grade for the strength of the evidence but rather synthesizes the issues in the bulleted list above for each key question to inform the Task Force's assessments of the adequacy of the evidence (Section 6).

4.5 Other Issues in Assessing Evidence at the Individual Study Level

4.5.1 Use of Observational Designs in Questions of the Effectiveness/Efficacy of Interventions

The Task Force strongly prefers multiple large, well-conducted RCTs to adequately determine the benefits and harms of preventive services. In many situations, however, such studies have not been or are not likely to be done. When other evidence is insufficient to determine benefits and/or harms, the Task Force encourages the research community to conduct large, well-designed and well-conducted RCTs.

Observational studies are often used to assess harms of preventive services. The Task Force also uses observational evidence to assess benefits. Multiple large, well-conducted observational studies with consistent results showing a large effect size that does not change markedly with adjustment for potential known confounders may be judged sufficient to determine the magnitude of benefit and harm of a preventive service. Also, large, well-conducted observational studies often provide additional evidence even in situations when there are adequate RCTs. Ideally, RCTs provide evidence that an intervention can work (efficacy), and observational studies provide a better understanding of whether these benefits exist across broader populations and settings.

4.5.2 Ecological Evidence

The Task Force rarely accepts ecological evidence alone as sufficient to recommend a preventive service. The Task Force is careful in its use of this type of evidence because substantial biases may be present. Ecological evidence is data that are not at the individual level but rather relate to the average exposure and average outcome within a population. Ecological studies usually make comparisons of outcomes in exposed and unexposed populations in one of two ways: 1) between different populations, some exposed and some not, at one point in time (i.e., cross-sectional ecological study); or 2) within a single population with changing exposure status over time (i.e., time-series ecological study). In either case, the potential for ecological fallacy is a major concern. Ecological fallacy is the bias or inference error that may occur because an association observed between variables at an aggregate level does not necessarily represent an association at an individual level. In addition, ecological data sets often do not include potential confounding factors; thus, one cannot directly assess the ability of these potential confounders to explain apparent associations. Finally, some ecological studies use data collected in ways that are not accurate or reliable.
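The ecological fallacy is easy to demonstrate with a toy data set. In the constructed example below (the numbers are invented solely for illustration), exposure and outcome are negatively related among individuals within every population, yet the population averages are perfectly positively related.

```python
import math
from typing import List, Tuple


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation coefficient."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Each population is a list of (exposure, outcome) pairs for individuals.
populations: List[List[Tuple[float, float]]] = [
    [(1, 3), (2, 2), (3, 1)],
    [(4, 6), (5, 5), (6, 4)],
    [(7, 9), (8, 8), (9, 7)],
]

# Individual-level association within each population.
within = [pearson([x for x, _ in p], [y for _, y in p]) for p in populations]

# Ecological (aggregate) association between population averages.
mean_x = [sum(x for x, _ in p) / len(p) for p in populations]
mean_y = [sum(y for _, y in p) / len(p) for p in populations]
ecological = pearson(mean_x, mean_y)

print("within-population correlations:", within)
print("correlation of population averages:", ecological)
```

An analyst who saw only the averages would infer that higher exposure goes with higher outcome values, the opposite of what holds for individuals in every one of these populations.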

The Task Force does not usually accept ecological evidence alone as adequate to establish the causal association of a preventive service and a health outcome because it is not possible to completely avoid the potential for committing the ecological fallacy in these studies. In some very unusual situations, ecological evidence may play the primary role in the Task Force's evidence review and subsequent recommendation (e.g., screening for cervical cancer), but this is rare. The Task Force may use ecological evidence for background or to develop an understanding of the context for which the preventive service is being considered. In addition, a review of ecological evidence may be warranted when well-known ecological data are used as evidence by others to justify a recommendation for Task Force consideration. The Task Force only rarely considers ecological studies as part of its evidentiary assessment. These circumstances could include when evidence from other study designs is considered inadequate but high-quality ecological evidence, especially studies demonstrating a very large magnitude of benefit or harm, could add important information. When the Task Force critically appraises ecological studies for use to develop a recommendation, the following criteria are used to assess the quality of the studies: 1) the exposures, outcomes, and potential confounders are measured accurately and reliably; 2) known potential explanations and potential confounders are considered and adjusted for; 3) the populations are comparable; 4) the populations and interventions are relevant to a primary care population; and 5) multiple ecological studies are present that are consistent/coherent.

4.5.3 Mortality as Outcome: All-Cause Versus Disease-Specific Mortality

When available and relevant, the Task Force considers data on both all-cause and cause-specific mortality in making its recommendations, taking into account the real and methodological contributions to any discrepancies between apparent and true effect. When a condition is a common cause of mortality, all-cause mortality is the desirable health outcome measure. However, few preventive interventions have a measurable effect on all-cause mortality. When there is a discrepancy between the effect of the preventive intervention on all-cause and disease-specific mortality, this is important to recognize and explore.

Three situations can result in a discrepancy between the effect on disease-specific and all-cause mortality. First, when a preventive intervention increases deaths from causes other than the one targeted by the intervention, all-cause mortality may not decline, even when cause-specific mortality is reduced. This indicates a potential harm of the intervention for conditions other than the one targeted.

Second, when the condition targeted by the preventive intervention is rare and/or the effect of the intervention on cause-specific mortality is small, the effect on all-cause mortality may be immeasurably small, even with very large sample sizes.

Third, when the preventive intervention is applied in a population with strong competing causes of mortality, the effect of the preventive intervention on all-cause mortality may be very small or absent, even though the intervention reduces cause-specific mortality. For example, preventing death due to hip fracture by implementing an intervention to decrease falls in 85-year-old women may not decrease all-cause mortality over reasonable time frames for a study because the force of mortality is so large at this age.

Methodological issues can arise because of difficulties in the assignment of cause of death based on records. In the absence of detail about the circumstances of death, it may be attributed to a chronic condition known to exist at the time of death but which is not, in fact, the direct cause. Coding conventions for death certificates also result in deaths from some causes being attributed to chronic conditions routinely present at death. For example, it is conventional to assign cancer as the primary cause of death to persons with a mention of cancer on the death certificate. The result of these methodological issues is a biased estimate of cause-specific mortality when the data are obtained from death certificates, which may not reflect the true effect an intervention has on death from the targeted condition. Similar methodological issues may occur as a result of adjudication committees.

As indicated above, studies that provide data on all-cause and cause-specific mortality may have low statistical power to detect even large or moderate effects of the preventive intervention on all-cause mortality. This is especially true when the disease targeted by the screening test is not common.
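The power problem can be made concrete with a standard sample-size approximation for comparing two proportions. The mortality figures below are hypothetical: a screening intervention is assumed to cut deaths from the target disease from 1.0% to 0.8%, and the same absolute reduction is assumed to carry through to all-cause mortality (10.0% to 9.8%) with no offsetting harms.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(p_control: float, p_intervention: float,
                        alpha: float = 0.05, power: float = 0.80) -> int:
    """Normal-approximation sample size per arm for a two-sided test of two proportions."""
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    z_beta = NormalDist().inv_cdf(power)
    variance = (p_control * (1.0 - p_control)
                + p_intervention * (1.0 - p_intervention))
    delta = p_control - p_intervention
    return math.ceil((z_alpha + z_beta) ** 2 * variance / delta ** 2)


# Hypothetical mortality risks over the study period.
n_cause_specific = sample_size_per_arm(0.010, 0.008)
n_all_cause = sample_size_per_arm(0.100, 0.098)

print("per-arm n, cause-specific mortality:", n_cause_specific)
print("per-arm n, all-cause mortality:", n_all_cause)
```

Under these assumptions the all-cause comparison needs roughly ten times as many participants per arm, because the same absolute difference must be detected against a much larger background of deaths from other causes.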

4.5.4 Subgroup Analyses

The Task Force is interested in targeting its recommendations to those populations or situations in which there would be maximal net benefit. Thus, it often takes into consideration subgroup analyses of large studies or studies evaluating particular subgroups of interest. The Task Force examines the credibility of those analyses, however, depending on such factors as: the size of the subgroup; whether randomization occurred within subgroups; whether a statistical test for interaction was done; whether the results of multiple subgroup analyses were internally consistent; whether the subgroup analyses were prespecified; and whether the results are biologically plausible.
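One common form of a test for interaction compares the effect estimates from two non-overlapping subgroups directly, rather than noting that one is statistically significant and the other is not. The sketch below uses a simple z-test on the difference between two estimates; it is one possible approach chosen for illustration, and the log relative risks and standard errors are hypothetical.

```python
import math
from statistics import NormalDist
from typing import Tuple


def interaction_test(est_a: float, se_a: float,
                     est_b: float, se_b: float) -> Tuple[float, float]:
    """z statistic and two-sided p-value for the difference between two
    independent subgroup estimates (e.g., log relative risks)."""
    diff = est_a - est_b
    se_diff = math.sqrt(se_a ** 2 + se_b ** 2)
    z = diff / se_diff
    p_value = 2.0 * (1.0 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical subgroup results: log RR in women vs. men.
z, p = interaction_test(-0.40, 0.15, -0.10, 0.14)
print(f"z = {z:.2f}, two-sided p = {p:.3f}")
```

A small p-value here suggests the effect genuinely differs between subgroups; a large one means the apparent difference is compatible with chance, however striking the individual subgroup results look.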

4.6 Incorporating Other Systematic Reviews in Task Force Reviews

Existing systematic reviews or meta-analyses that meet quality and relevance criteria can be incorporated into reviews done for the Task Force. Existing reviews can be used in a Task Force review in several ways: 1) to answer one or more key questions, wholly or in part; 2) to substitute for conducting a systematic search for a specific time period for a specific key question; or 3) as a source document for cross-checking the results of systematic searches. Quality assessment of existing systematic reviews is a critical step and should address both the methods used to minimize bias as well as the transparency and completeness of reporting of review methods, individual study details, and results. The Task Force has specific criteria for critically appraising systematic reviews (Appendix VI). The EPC may supplement these criteria with newer methods of evaluating systematic reviews and meta-analyses. Relevance is considered at two levels: "Is the review or meta-analysis relevant to one or more of the Task Force key questions for this review?" and "Did the review include the desired study designs and relevant population(s), settings, exposure/intervention(s), comparator(s), and outcome(s)?" Recency of the review is also a consideration and can determine whether a review that meets quality and relevance criteria is recent enough or requires a bridge search.

4.7 Reaffirmation Evidence Update Process

Reaffirmed topics are topics kept current by the Task Force because the topic is within the Task Force's scope and priority and because there is a compelling reason for the Task Force to make a recommendation. Topics that belong in this category are well-established, evidence-based standards of practice in current primary care practice (e.g., screening for hypertension). While the Task Force would like these recommendations to remain active and current in its library of preventive services, it has determined that only a very high level of evidence would justify a change in the grade of the recommendation. Only recommendations with a current grade of A or D are considered for reaffirmation. The goal of this process is to reaffirm the previous recommendation. Therefore, the goal of the search for evidence in a reaffirmation evidence update is to find new and substantial evidence sufficient to change the recommendation.

- The topic may be identified for a reaffirmation evidence update by the Topic Prioritization Workgroup and approved for a reaffirmation evidence update by the entire Task Force following the usual process for prioritization, including the annual request for feedback from USPSTF members, partner organizations, and stakeholders. Several Task Force members (one as the primary lead) are identified to take the lead on the topic and serve in the same lead role as on other topic teams, as described in Appendix IV.

- The topic team (review team, AHRQ Medical Officer, and Task Force leads) reviews the previous recommendation statement, evidence review, and background document prepared for topic prioritization. The topic team confirms that the topic is appropriate for a reaffirmation evidence update and then further defines the scope of the literature search. The literature search scope is limited to key questions in the evidence review for the previous recommendation. If there is a need for additional key questions or other expansion beyond the original scope, the topic is referred back to the Topic Prioritization Workgroup for consideration for a systematic review. Any other concerns about whether the topic is appropriate for a reaffirmation evidence update are referred for discussion by the Topic Prioritization Workgroup.

- The topic team consults experts in the field to identify important evidence published since the last evidence review.

- The topic team identifies recommendations from other Federal agencies and professional organizations.

- The topic team performs literature searches in PubMed and the Cochrane Central Register of Controlled Trials on benefits and harms of the preventive service. Other databases are included when indicated by the topic (e.g., PsycINFO for mental health topics). The benefits and harms to be reviewed are predefined through consultation with the Topic Prioritization Workgroup. In general, the literature search uses the MeSH terms from the previous evidence review, searches for studies published since the last review (at least 3 months prior to the end date of the previous search), is limited to the English language, is limited to humans, and is limited to the journals in the abridged Index Medicus (i.e., the 120 "core clinical journals" in PubMed). These limits may be expanded or modified as needed. For the literature search on benefits, the search is limited to meta-analyses, systematic reviews, and controlled trials; for harms, the search includes meta-analyses, systematic reviews, controlled trials, cohort studies, case-control studies, and large case series. Additionally, the reference lists of major review articles or important studies are reviewed for potential studies to include.

- The topic team defines the inclusion/exclusion criteria. Limits on the size or duration of studies may be used as exclusion criteria. Two independent reviewers select studies to be included based on consensus on whether they meet the inclusion/exclusion criteria. A third reviewer is consulted if the two reviewers do not reach consensus.

- If substantial new evidence is identified or if the topic team discovers that the evidence base for the prior review may not support a reaffirmation evidence update, the issue is discussed with the Topic Prioritization Workgroup, which decides whether the topic should be addressed with a systematic review.

- The topic team prepares a summary of the findings of the evidence update. The format of this document depends on whether the summary will be submitted to a journal for publication.

- The results of the evidence update, expert discussion, and draft recommendation statement are presented to the leads and then to the entire Task Force for approval.
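The default search limits in the steps above can be expressed as a simple eligibility filter. This is a sketch under stated assumptions: the record fields, design labels, and the `months_before` helper are hypothetical, the look-back uses exactly 3 months (the text says "at least"), and in practice these limits are applied through database search filters and may be expanded or modified.

```python
from datetime import date
from typing import Dict

BENEFIT_DESIGNS = {"meta-analysis", "systematic review", "controlled trial"}
HARM_DESIGNS = BENEFIT_DESIGNS | {"cohort study", "case-control study",
                                  "large case series"}


def months_before(d: date, months: int) -> date:
    """Date `months` calendar months earlier (day clamped to 28 for simplicity)."""
    total = d.year * 12 + (d.month - 1) - months
    year, month_index = divmod(total, 12)
    return date(year, month_index + 1, min(d.day, 28))


def eligible(record: Dict, previous_search_end: date, question: str) -> bool:
    """Apply the default limits; `question` is "benefits" or "harms"."""
    designs = BENEFIT_DESIGNS if question == "benefits" else HARM_DESIGNS
    return (record["language"] == "English"
            and record["humans"]
            and record["core_clinical_journal"]
            and record["published"] >= months_before(previous_search_end, 3)
            and record["design"] in designs)


# Hypothetical record: a cohort study published after the previous search ended.
cohort = {"language": "English", "humans": True, "core_clinical_journal": True,
          "published": date(2021, 6, 1), "design": "cohort study"}
previous_end = date(2020, 2, 15)
print(eligible(cohort, previous_end, "harms"))
print(eligible(cohort, previous_end, "benefits"))
```

The same cohort study qualifies for the harms search but not the benefits search, mirroring the narrower set of designs accepted for benefits.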